Meta’s Oversight Board Calls for Major Overhaul of Deepfake Detection

Concerns over the effectiveness of Meta’s deepfake detection system have surfaced following a recent assessment from the company’s Oversight Board. The board concluded that Meta’s current methods are inadequate for addressing the increasing sophistication of AI-generated content. This evaluation comes in the wake of a troubling incident involving a deepfake video that misrepresented damage in Israel, raising alarms about the potential for misinformation during critical events.

The Oversight Board, a semi-independent body that guides Meta’s moderation practices, emphasized that the existing framework lacks the depth and speed needed to keep pace with rapidly evolving online misinformation. The board’s findings highlight the urgency of improving detection systems, since misinformation can spread quickly across platforms such as Facebook, Instagram, and Threads.

The investigation focused on a specific incident where an AI-generated video falsely depicted destruction in Israel. This content circulated widely before being identified as misleading. The board underscored the dangers of such misinformation, particularly during conflicts when individuals rely on social media for timely updates.

One significant shortcoming identified by the board is Meta’s heavy reliance on self-disclosure from content creators. Currently, the platform depends largely on creators to acknowledge their use of AI, or on industry standards like C2PA, which embeds provenance metadata within digital files. Most misleading content carries neither marker, making it difficult for users to distinguish fact from fiction. The board noted that even Meta’s own AI tools are inconsistently labeled, contributing to user confusion.

Recommendations for a Proactive Approach

In light of these findings, the Oversight Board has proposed a comprehensive overhaul of how Meta handles synthetic media. Its recommendations advocate a more proactive stance toward AI-generated content. Specifically, the board calls for the development of advanced internal tools that can automatically flag “High-Risk AI” content without waiting for user reports. It also urged the establishment of a dedicated community standard for AI-generated media to replace the current inconsistent set of guidelines.

Speed is critical in this context. During periods of conflict, a fabricated video can achieve viral status and reach millions within hours. By the time a human moderator or fact-checker intervenes, the narrative may already be skewed. The board argued that Meta must enhance transparency regarding penalties for policy violations and ensure that labeling is clear and accessible to all users.

Although the Oversight Board’s recommendations are not legally binding, they carry significant influence. Meta now faces a pivotal decision about how much to invest in improving the authenticity and reliability of its platforms.

As the challenges of misinformation continue to grow, the pressure is on Meta to adapt swiftly and effectively in order to safeguard the integrity of the information shared across its social media platforms.

-

Education7 months ago

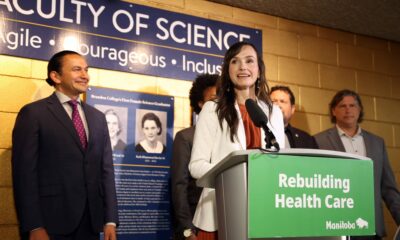

Education7 months agoBrandon University’s Failed $5 Million Project Sparks Oversight Review

-

Science8 months ago

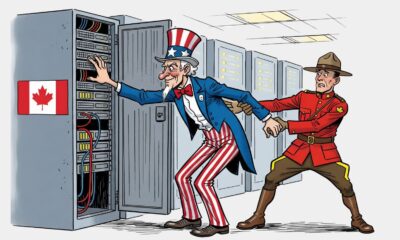

Science8 months agoMicrosoft Confirms U.S. Law Overrules Canadian Data Sovereignty

-

Lifestyle7 months ago

Lifestyle7 months agoWinnipeg Celebrates Culinary Creativity During Le Burger Week 2025

-

Lifestyle4 months ago

Lifestyle4 months agoDiscover Aritzia’s Latest Fashion Trends: A Comprehensive Review

-

Education8 months ago

Education8 months agoNew SĆIȺNEW̱ SṮEȽIṮḴEȽ Elementary Opens in Langford for 2025/2026 Year

-

Business4 months ago

Business4 months agoEngineAI Unveils T800 Humanoid Robot, Setting New Industry Standards

-

Health8 months ago

Health8 months agoMontreal’s Groupe Marcelle Leads Canadian Cosmetic Industry Growth

-

Science8 months ago

Science8 months agoTech Innovator Amandipp Singh Transforms Hiring for Disabled

-

Technology8 months ago

Technology8 months agoDragon Ball: Sparking! Zero Launching on Switch and Switch 2 This November

-

Technology3 months ago

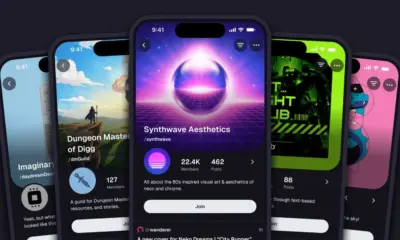

Technology3 months agoDigg Relaunches as Founders Kevin Rose and Alexis Ohanian Join Forces

-

Lifestyle4 weeks ago

Lifestyle4 weeks agoCanmore’s Le Fournil Bakery to Close After 14 Successful Years

-

Top Stories4 months ago

Top Stories4 months agoCanadiens Eye Elias Pettersson: What It Would Cost to Acquire Him

-

Health7 months ago

Health7 months agoEganville Leader to Close in 2026 After 123 Years of Reporting

-

Education8 months ago

Education8 months agoRed River College Launches New Programs to Address Industry Needs

-

Top Stories4 months ago

Top Stories4 months agoNicol Brothers Shine as Wheat Kings Dominate U18 AAA Hockey

-

Business8 months ago

Business8 months agoBNA Brewing to Open New Bowling Alley in Downtown Penticton

-

Business7 months ago

Business7 months agoRocket Lab Reports Strong Q2 2025 Revenue Growth and Future Plans

-

Education6 months ago

Education6 months agoAlberta Petition Aims to Redirect Funds from Private to Public Schools

-

Lifestyle5 months ago

Lifestyle5 months agoEdmonton’s Beloved Evolution Wonderlounge Closes, New Era Begins

-

Education8 months ago

Education8 months agoAlberta Teachers’ Strike: Potential Impacts on Students and Families

-

Technology6 months ago

Technology6 months agoDiscord Faces Serious Security Breach Affecting Millions

-

Technology8 months ago

Technology8 months agoGoogle Pixel 10 Pro Fold Specs Unveiled Ahead of Launch

-

Business8 months ago

Business8 months agoIconic Golden Lion Restaurant in South Surrey to Close After 50 Years

-

Lifestyle6 months ago

Lifestyle6 months agoCanadian Author Secures Funding to Write Book Without Financial Strain