Trust in AI for Moral Decisions Remains Elusive, Study Finds

Despite rapid advances in artificial intelligence (AI), a recent study highlights significant hurdles to trusting AI systems with moral decisions. Researchers from the University of Kent investigated public perceptions of Artificial Moral Advisors (AMAs) and found that most people remain skeptical of the ethical guidance AI provides.

Study Reveals Distrust in AI’s Moral Judgement

The research, published in the journal Cognition, shows that although AMAs could potentially offer impartial advice, people are hesitant to rely on them in ethical dilemmas. Participants displayed a marked preference for human advisors over AI, even when the recommendations were identical. This skepticism was especially pronounced when the advice was based on utilitarian principles, which prioritize actions that benefit the majority.

Participants expressed greater trust in advisors who adhered to non-utilitarian moral rules, particularly in scenarios involving direct harm to individuals. This suggests that people place more value on human-centric principles than on abstract outcomes, which poses a fundamental challenge for integrating AI into morally sensitive domains.

Dr. Tim Sandle, a microbiologist and editor at Digital Journal, emphasized the importance of understanding this inherent skepticism. “Trusting AI in moral contexts is not solely about its accuracy,” he noted. “It also hinges on how well AI aligns with human values and expectations.” Even when participants agreed with an AI’s decision, they anticipated future disagreements, reflecting a consistent wariness toward AI’s ethical reasoning.

AI’s Amplification of Human Biases

One of the key issues identified in the study is the tendency of AI systems to inherit and amplify human biases. These biases not only skew the recommendations made by AI but can also influence the users themselves, creating a feedback loop that exacerbates existing prejudices. For instance, systems like ChatGPT can develop biases akin to those observed in humans, often displaying favoritism toward certain groups while alienating others.

These findings become more significant as AI’s capabilities and applications expand. Because AMAs are designed to assist in moral decision-making, developers and policymakers need to address these biases to foster greater acceptance among users. Understanding how people perceive and trust AI’s moral guidance will be essential for future work in this area.

In conclusion, while the potential for AI to contribute to ethical decision-making exists, significant barriers to trust and acceptance remain. The research underscores the need for ongoing dialogue between technologists and the public to bridge the gap between human values and AI’s evolving role in moral contexts.

-

Education7 months ago

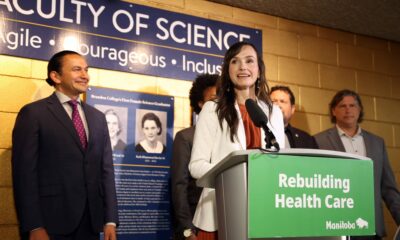

Education7 months agoBrandon University’s Failed $5 Million Project Sparks Oversight Review

-

Science8 months ago

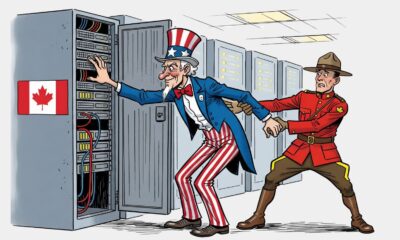

Science8 months agoMicrosoft Confirms U.S. Law Overrules Canadian Data Sovereignty

-

Lifestyle4 months ago

Lifestyle4 months agoDiscover Aritzia’s Latest Fashion Trends: A Comprehensive Review

-

Lifestyle8 months ago

Lifestyle8 months agoWinnipeg Celebrates Culinary Creativity During Le Burger Week 2025

-

Education8 months ago

Education8 months agoNew SĆIȺNEW̱ SṮEȽIṮḴEȽ Elementary Opens in Langford for 2025/2026 Year

-

Business4 months ago

Business4 months agoEngineAI Unveils T800 Humanoid Robot, Setting New Industry Standards

-

Health8 months ago

Health8 months agoMontreal’s Groupe Marcelle Leads Canadian Cosmetic Industry Growth

-

Science8 months ago

Science8 months agoTech Innovator Amandipp Singh Transforms Hiring for Disabled

-

Technology8 months ago

Technology8 months agoDragon Ball: Sparking! Zero Launching on Switch and Switch 2 This November

-

Lifestyle1 month ago

Lifestyle1 month agoCanmore’s Le Fournil Bakery to Close After 14 Successful Years

-

Technology3 months ago

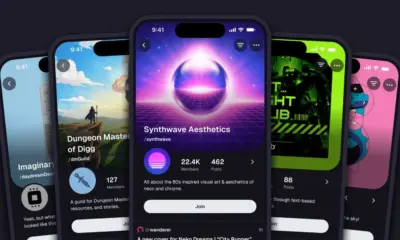

Technology3 months agoDigg Relaunches as Founders Kevin Rose and Alexis Ohanian Join Forces

-

Top Stories4 months ago

Top Stories4 months agoCanadiens Eye Elias Pettersson: What It Would Cost to Acquire Him

-

Health7 months ago

Health7 months agoEganville Leader to Close in 2026 After 123 Years of Reporting

-

Education8 months ago

Education8 months agoRed River College Launches New Programs to Address Industry Needs

-

Top Stories4 months ago

Top Stories4 months agoNicol Brothers Shine as Wheat Kings Dominate U18 AAA Hockey

-

Business8 months ago

Business8 months agoBNA Brewing to Open New Bowling Alley in Downtown Penticton

-

Lifestyle5 months ago

Lifestyle5 months agoEdmonton’s Beloved Evolution Wonderlounge Closes, New Era Begins

-

Business7 months ago

Business7 months agoRocket Lab Reports Strong Q2 2025 Revenue Growth and Future Plans

-

Education6 months ago

Education6 months agoAlberta Petition Aims to Redirect Funds from Private to Public Schools

-

Education8 months ago

Education8 months agoAlberta Teachers’ Strike: Potential Impacts on Students and Families

-

Technology6 months ago

Technology6 months agoDiscord Faces Serious Security Breach Affecting Millions

-

Technology8 months ago

Technology8 months agoGoogle Pixel 10 Pro Fold Specs Unveiled Ahead of Launch

-

Business8 months ago

Business8 months agoIconic Golden Lion Restaurant in South Surrey to Close After 50 Years

-

Lifestyle6 months ago

Lifestyle6 months agoCanadian Author Secures Funding to Write Book Without Financial Strain