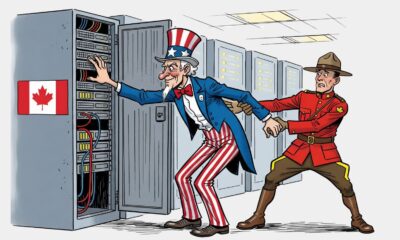

Canadian Spy Watchdog Investigates AI Use in National Security

Canada’s National Security and Intelligence Review Agency is initiating a comprehensive examination of the role and governance of artificial intelligence (AI) within national security operations. This review aims to evaluate how Canadian security agencies define, utilize, and regulate AI technologies amid growing concerns about their implications for privacy and civil liberties.

The agency has informed several federal ministers and security organizations about this initiative. In a letter addressed to key officials, including Prime Minister Mark Carney and ministers responsible for digital innovation and public safety, review agency chair Marie Deschamps emphasized that the findings will offer critical insights into the current use of AI tools and highlight potential risks that may require further scrutiny.

The scope of the review encompasses various applications of AI in national security, ranging from translation services to malware detection. The agency’s statutory mandate allows it access to all information held by departments and security agencies, including classified materials, with certain exceptions. The letter specifies that the review may involve document requests, briefings, interviews, and even independent inspections of specific technical systems.

The examination covers security agencies such as the Canadian Security Intelligence Service (CSIS), the Royal Canadian Mounted Police (RCMP), and the Communications Security Establishment (CSE). Agencies not typically associated with security, such as the Canadian Food Inspection Agency and the Public Health Agency of Canada, also received the letter, indicating the broad scope of the review.

In response to inquiries about the review, the RCMP expressed its support for external evaluations of national security and intelligence operations. “The RCMP believes that establishing transparent and accountable external review processes is critical to maintaining public confidence and trust,” the organization stated in a media release.

In 2024, a report from the National Security Transparency Advisory Group urged Canadian security agencies to provide detailed accounts of their AI systems and intended applications. The report projected a growing reliance on AI to process large volumes of text and images, recognize patterns, and interpret behavioural trends. While CSIS and CSE acknowledged the need for transparency regarding AI, they pointed out limits on what could be shared publicly due to security protocols.

The federal government’s principles for AI usage stress the importance of openness about how and why AI is employed, alongside proactive risk assessment to protect legal rights and democratic norms. Training public officials on the ethical and operational implications of AI, including issues related to privacy and security, is also a key component of these principles.

In its latest annual report, CSIS indicated it was rolling out AI pilot programs consistent with the government’s guiding principles. The RCMP has outlined several factors to ensure that AI is employed in a legal, ethical, and responsible manner. These considerations include designing systems to prevent bias, maintaining privacy during data analysis, and ensuring transparency in AI decision-making processes.

The CSE’s AI strategy highlights its commitment to developing innovative capabilities to address complex challenges through responsible AI deployment. CSE chief Caroline Xavier emphasized the importance of a thoughtful approach to AI adoption, stating, “We will always be thoughtful and rule-bound in our adoption of AI, keeping responsibility and accountability at the core of how we will achieve our goals.”

As this review unfolds, it may pave the way for clearer guidelines on the use of AI in national security, ensuring that Canadian agencies prioritize ethical considerations while leveraging advanced technologies. The findings from this investigation will likely inform future policy decisions and regulatory frameworks surrounding AI in the security sector.

This report was first published on January 1, 2026, by The Canadian Press.

-

Education7 months ago

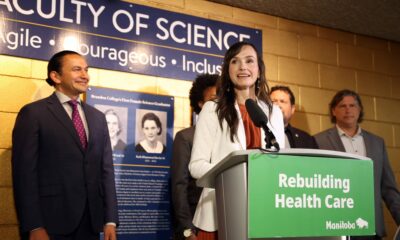

Education7 months agoBrandon University’s Failed $5 Million Project Sparks Oversight Review

-

Science8 months ago

Science8 months agoMicrosoft Confirms U.S. Law Overrules Canadian Data Sovereignty

-

Lifestyle7 months ago

Lifestyle7 months agoWinnipeg Celebrates Culinary Creativity During Le Burger Week 2025

-

Lifestyle4 months ago

Lifestyle4 months agoDiscover Aritzia’s Latest Fashion Trends: A Comprehensive Review

-

Education7 months ago

Education7 months agoNew SĆIȺNEW̱ SṮEȽIṮḴEȽ Elementary Opens in Langford for 2025/2026 Year

-

Business4 months ago

Business4 months agoEngineAI Unveils T800 Humanoid Robot, Setting New Industry Standards

-

Health8 months ago

Health8 months agoMontreal’s Groupe Marcelle Leads Canadian Cosmetic Industry Growth

-

Science8 months ago

Science8 months agoTech Innovator Amandipp Singh Transforms Hiring for Disabled

-

Technology8 months ago

Technology8 months agoDragon Ball: Sparking! Zero Launching on Switch and Switch 2 This November

-

Technology3 months ago

Technology3 months agoDigg Relaunches as Founders Kevin Rose and Alexis Ohanian Join Forces

-

Top Stories4 months ago

Top Stories4 months agoCanadiens Eye Elias Pettersson: What It Would Cost to Acquire Him

-

Lifestyle4 weeks ago

Lifestyle4 weeks agoCanmore’s Le Fournil Bakery to Close After 14 Successful Years

-

Health7 months ago

Health7 months agoEganville Leader to Close in 2026 After 123 Years of Reporting

-

Education8 months ago

Education8 months agoRed River College Launches New Programs to Address Industry Needs

-

Top Stories4 months ago

Top Stories4 months agoNicol Brothers Shine as Wheat Kings Dominate U18 AAA Hockey

-

Business7 months ago

Business7 months agoRocket Lab Reports Strong Q2 2025 Revenue Growth and Future Plans

-

Business8 months ago

Business8 months agoBNA Brewing to Open New Bowling Alley in Downtown Penticton

-

Education6 months ago

Education6 months agoAlberta Petition Aims to Redirect Funds from Private to Public Schools

-

Education8 months ago

Education8 months agoAlberta Teachers’ Strike: Potential Impacts on Students and Families

-

Technology6 months ago

Technology6 months agoDiscord Faces Serious Security Breach Affecting Millions

-

Technology8 months ago

Technology8 months agoGoogle Pixel 10 Pro Fold Specs Unveiled Ahead of Launch

-

Lifestyle5 months ago

Lifestyle5 months agoEdmonton’s Beloved Evolution Wonderlounge Closes, New Era Begins

-

Business7 months ago

Business7 months agoIconic Golden Lion Restaurant in South Surrey to Close After 50 Years

-

Science8 months ago

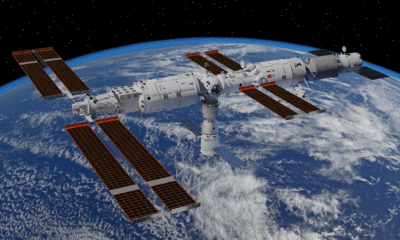

Science8 months agoChina’s Wukong Spacesuit Sets New Standard for AI in Space